What are these AI art generators we’ve been hearing about?

With the rise of AI art generator apps, social media feeds and the news have been flooded with AI generated art. But what actually is an AI art generator? How does it work? Should we be using them?

In this article, we’ll take a look at what AI art generators are, how they work, some of the controversies surrounding them, and what the future may hold.

What is an AI art generator?

An AI art generator is a computer program designed to create artwork using artificial intelligence.

AI art generators are capable of creating artwork in a variety of styles, including 2D imagery, 3D models, and even ground-breaking works that combine both types.

Using deep learning algorithms, AI art generators can analyze existing pieces of artwork to generate new pieces with similar characteristics.

AI art generators are becoming increasingly popular with consumers, especially with Lensa AI’s new “magic avatars” feature. Fantastical images of people have begun popping up all over social media.

How does an AI art generator work?

Artificial intelligence is only as good as the data it’s been trained with. These systems have to be taught over and over with examples. Specifically, AI art generation models are a type of foundation model known as diffusion models.

An AI art generator is trained with billions of images. The system is designed to recognize patterns and characteristics in the images, and it uses these observations to generate new pieces of artwork.

An AI system recognizes patterns and characteristics in images by analyzing the pixels in the image. During training, it learns which pixel patterns tend to go with which descriptions across its training images. When it generates, it draws on those learned patterns, rather than looking anything up in a database, to produce a new piece of artwork that resembles what it was trained on.

The AI art generator might be able to determine the general composition of a painting, such as the color palette or subject matter. It can also analyze smaller details, like brush strokes and texture. With this information, it can generate new artwork with similar characteristics.

AI art generators have been used to create everything from abstract paintings and murals to realistic portraits and landscapes.

Popular AI art generators

Generative art AI takes a lot of time and training data to create. Three of the most successful AI art generators are DALL-E, Midjourney, and Stable Diffusion, which are built off of diffusion model technology.

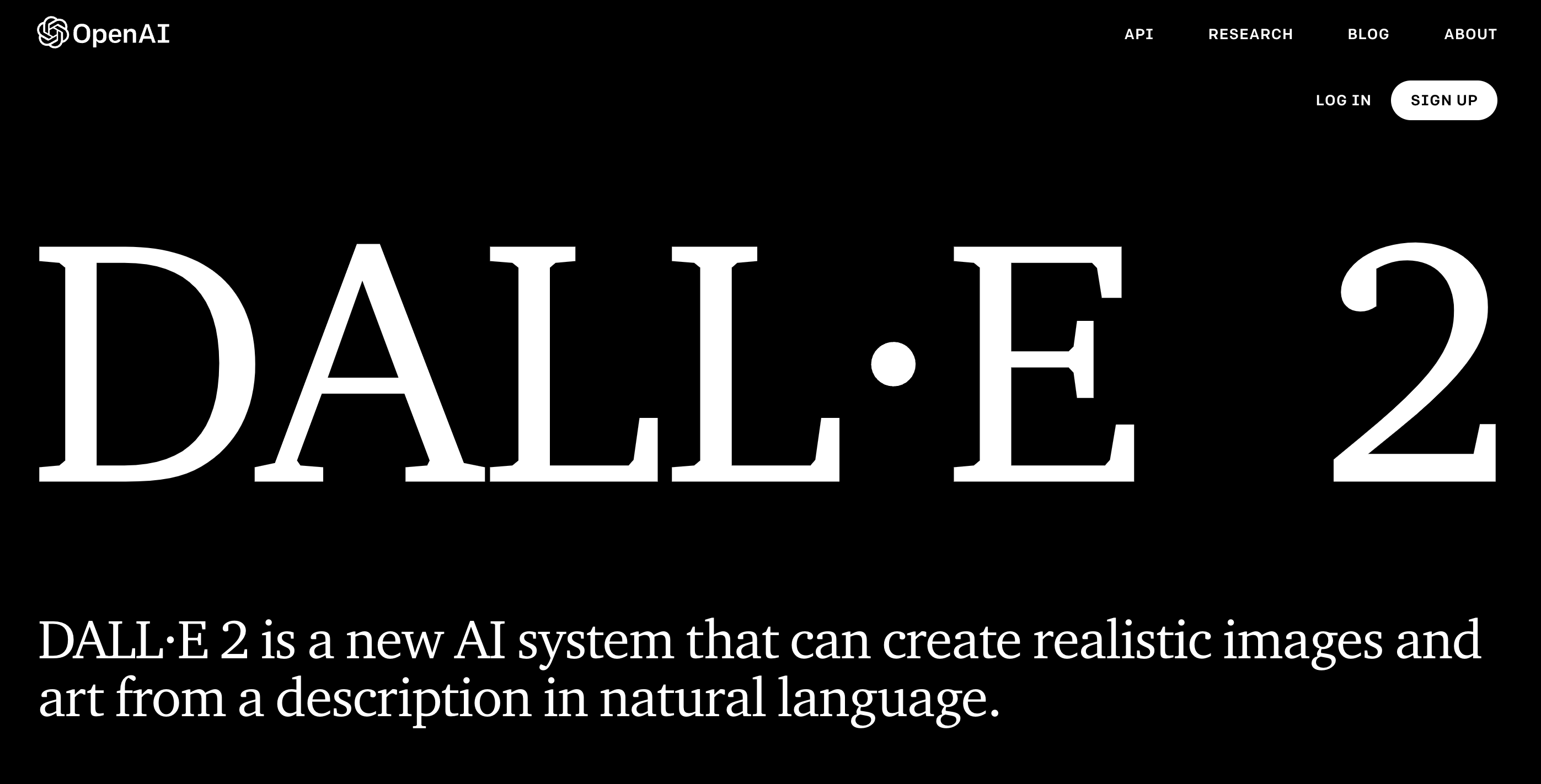

DALL-E

In January 2021, OpenAI released DALL-E. A year later, they released the second version, DALL-E 2, which does a better job of matching the prompts you give it. Both are advanced AI image generator machine learning models that have learned the relationship between images and the text used to describe them.

The creators of DALL-E 2 say: “Our hope is that DALL·E 2 will empower people to express themselves creatively. DALL·E 2 also helps us understand how advanced AI systems see and understand our world, which is critical to our mission of creating AI that benefits humanity.”

DALL-E 2 was created by training a neural network on images and their text descriptions. Through deep learning, this AI drawing generator not only understands individual objects such as koala bears and motorcycles, but also learns the relationships between objects. So if you ask DALL-E 2 to create an image of a koala bear riding a motorcycle, it knows that the koala bear needs to be on top of the motorcycle and holding its handlebars.

DALL-E 2 uses a process called “diffusion,” which starts with a pattern of random dots and gradually alters that pattern towards an image when it recognizes specific aspects of that image.
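To make the idea of "starting with random dots and gradually altering them" more concrete, here is a minimal toy sketch in Python. It is not how DALL-E 2 is actually implemented: a real diffusion model uses a trained neural network to predict what noise to remove at each step, while this example cheats by using a known target image in place of that prediction. The function name `toy_denoise` and the four-pixel "image" are made up for illustration.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Start from random noise and nudge it toward `target` a little each step.

    Assumption: the known `target` stands in for what a trained network
    would predict in a real diffusion model.
    """
    rng = random.Random(seed)
    # Begin with pure noise: one random value per "pixel".
    image = [rng.random() for _ in target]
    for step in range(steps):
        # Fraction of the remaining gap to close on this step.
        blend = 1.0 / (steps - step)
        image = [px + blend * (t - px) for px, t in zip(image, target)]
    return image


target = [0.0, 0.5, 1.0, 0.5]  # a tiny 4-pixel grayscale "image"
result = toy_denoise(target)
print(result)
```

Each pass removes a bit more of the randomness, so the noise slowly turns into the picture. In a real generator, the text prompt steers what the network thinks the picture should be.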

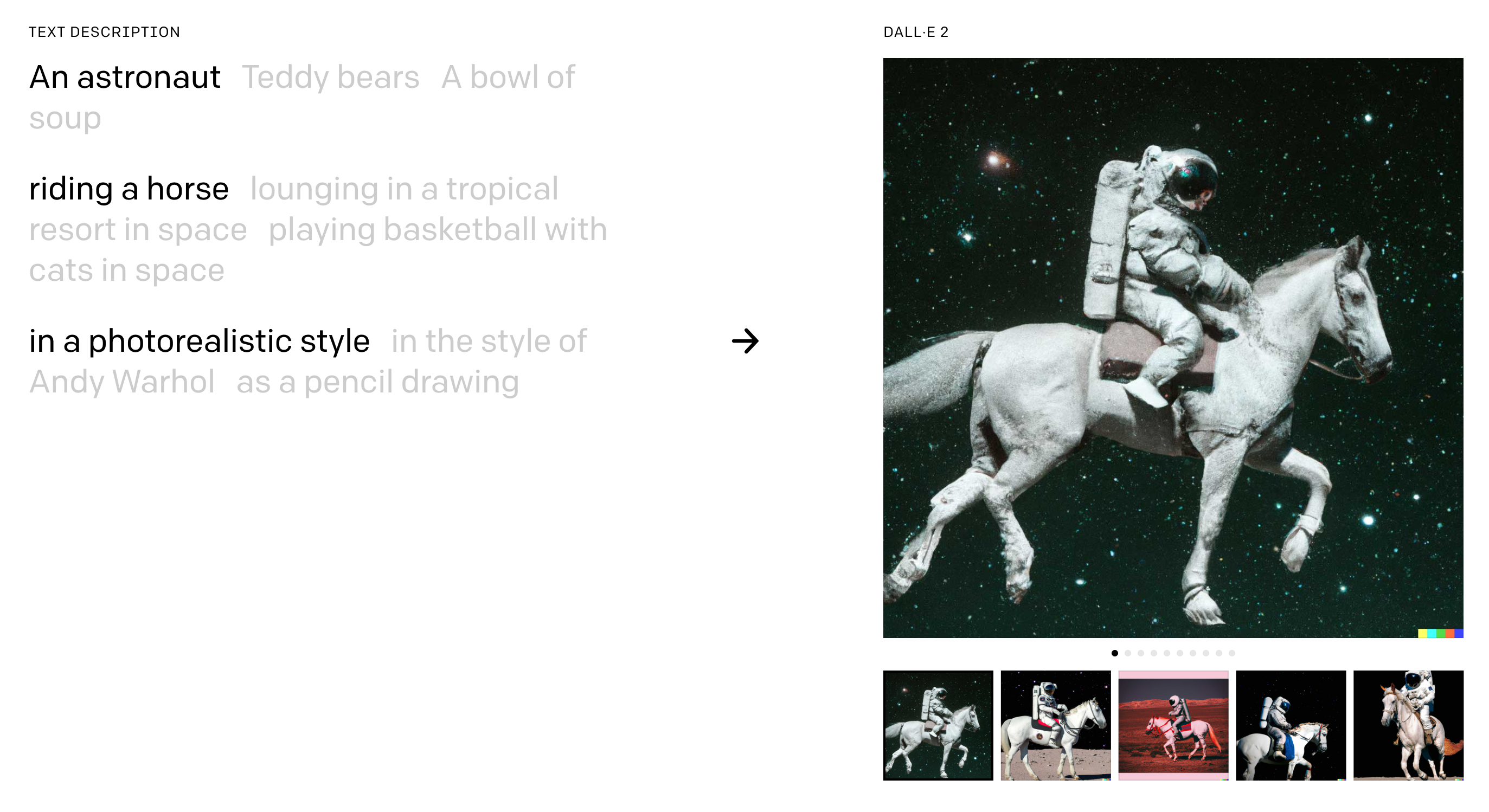

As a user, you would give DALL-E 2 a prompt, such as “an astronaut riding a horse in a photorealistic style” (as in the example shown), and DALL-E 2 will generate AI art based off your prompt.

DALL-E 2 can also accept image uploads and create AI generated art variations inspired by the uploaded image. The variations might include the original image at different angles, a different style of art, or a different art subject in the same style.

According to the OpenAI website, DALL-E 2 has three main outcomes:

- Helping people express themselves visually in ways they may not have been able to before

- From a research perspective, AI generated images help us understand whether the AI actually understands what we’re telling it or if it’s just repeating what it’s been taught

- In general, DALL-E helps people understand how AI systems see and understand our world, which is a critical part of developing AI that’s useful and safe

Limitations of DALL-E

Like any AI system, DALL-E is only as good as its training data. If it’s taught with images that are incorrectly labeled, such as an image of an airplane labeled as a car, and then someone tries to generate AI art featuring a car, DALL-E may create a plane. It’s like talking to someone who learned the wrong word for something.
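The mislabeling problem can be illustrated with a tiny toy example. This is only a sketch under heavy simplification: the "model" here just counts which image content was paired with each label, which is nothing like a real neural network, and the names `train` and `generate` and the sample data are invented for illustration.

```python
from collections import Counter, defaultdict


def train(examples):
    """Count which image content appears with each caption label."""
    learned = defaultdict(Counter)
    for label, content in examples:
        learned[label][content] += 1
    return learned


def generate(learned, prompt):
    """Return the content most often paired with `prompt`.

    Returns None for an unseen prompt; this toy has nothing to fall back
    on, whereas a real model would still produce some kind of guess.
    """
    if prompt not in learned:
        return None
    return learned[prompt].most_common(1)[0][0]


examples = [
    ("car", "airplane photo"),  # mislabeled training example
    ("car", "airplane photo"),  # mislabeled training example
    ("car", "car photo"),
    ("baboon", "baboon photo"),
]
model = train(examples)
print(generate(model, "car"))  # prints "airplane photo"
```

Because most of the examples labeled "car" were really airplanes, asking for a car gives you an airplane. The model did exactly what it was taught.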

In the same way, if you try to give DALL-E a prompt it hasn’t learned yet, it will give you its best guess at what that is. For instance, if it knows what a baboon is, it’ll give you images of a baboon. But if you type in howler monkey, and it doesn’t know what a howler monkey is, it’ll give you a guess.

Safety Mitigations

OpenAI has implemented several initiatives to deploy this AI responsibly.

Explicit Content

In order to prevent harmful image generations, they removed explicit content from their training data to minimize DALL-E 2’s exposure to violent, hateful, or adult images.

Privacy Protection

OpenAI has implemented advanced techniques to prevent photorealistic generations of real individuals’ faces, including those of public figures.

Preventing Misuse

The OpenAI content policy does not allow users to generate AI art with violent, adult, or political content, among other restricted categories. If their filters identify text prompts or image uploads that violate their policies, the system will not generate AI art. There are both automated and human monitoring mechanisms in place to guard against misuse.
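As a rough illustration of what an automated prompt filter does, here is a deliberately naive keyword check in Python. OpenAI does not publish how its filters work, and real systems are far more sophisticated than this. The blocklist, the function name `is_prompt_allowed`, and the sample prompts are all hypothetical.

```python
# Hypothetical blocklist for illustration only; not any vendor's real list.
BLOCKED_TERMS = {"gore", "nude"}


def is_prompt_allowed(prompt, blocked_terms=BLOCKED_TERMS):
    """Return True if none of the prompt's words appear in the blocklist."""
    # Lowercase each word and strip common punctuation before comparing.
    words = {word.strip(".,!?\"'").lower() for word in prompt.split()}
    return words.isdisjoint(blocked_terms)


print(is_prompt_allowed("a koala bear riding a motorcycle"))
print(is_prompt_allowed("A battlefield full of gore!"))
```

A simple word list like this is easy to get around with misspellings or synonyms, which is one reason human moderators are usually part of the picture too.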

Phased Deployment

Rather than releasing the tool immediately, OpenAI started with a limited number of trusted users to test out the tool. As they learned more, they started adding more users and eventually released the beta version of DALL-E 2 in July 2022.

Midjourney

Midjourney is a self-funded, independent research lab that “explores new mediums of thought and expands the imaginative powers of the human species.” The founder, David Holz, told The Register that their company is different from a startup or a typical research lab, which is required to make a certain amount of profit to pay back investments. Their motivation is to have fun and create technology people enjoy.

Midjourney’s technology works in much the same way as DALL-E’s, although they do not use the same machine learning models. Midjourney is trained on billions of images and text descriptors to then learn how to generate AI images. Holz noted that one of the key differences between the training data they used and the data that DALL-E uses is beauty. He shared with The Register that when they were optimizing the data, they wanted the output to look beautiful. He notes that beautiful doesn’t necessarily mean realistic.

Midjourney has a huge community associated with it. You can access Midjourney in one of two ways: through their website or through their Discord community. The command Midjourney uses to start a prompt is “imagine.” So you write, “imagine an abstract field of flowers,” and Midjourney will create some variations for you.

Version 3 of Midjourney did not incorporate new art into its training data. Instead, it took data about how the users used the tool and what they liked to inform the improvements.

Safety Mitigations

Midjourney’s content policy is less extensive than DALL-E 2’s. However, Midjourney’s Terms of Service state: “No adult content or gore. Please avoid making visually shocking or disturbing content. We will block some text inputs automatically.”

Midjourney also has about 40 moderators managing the community to make sure the content fits their guidelines.

Stable Diffusion

Another AI Art generator model is Stable Diffusion, created by Stability AI, a company that funds a number of different AI projects. Stable Diffusion openly discloses that their image dataset comes from the LAION dataset, which was a general crawl of the internet created by a German charity. Their website states that the images included are “intended to be a general representation of the language-image connection of the internet.”

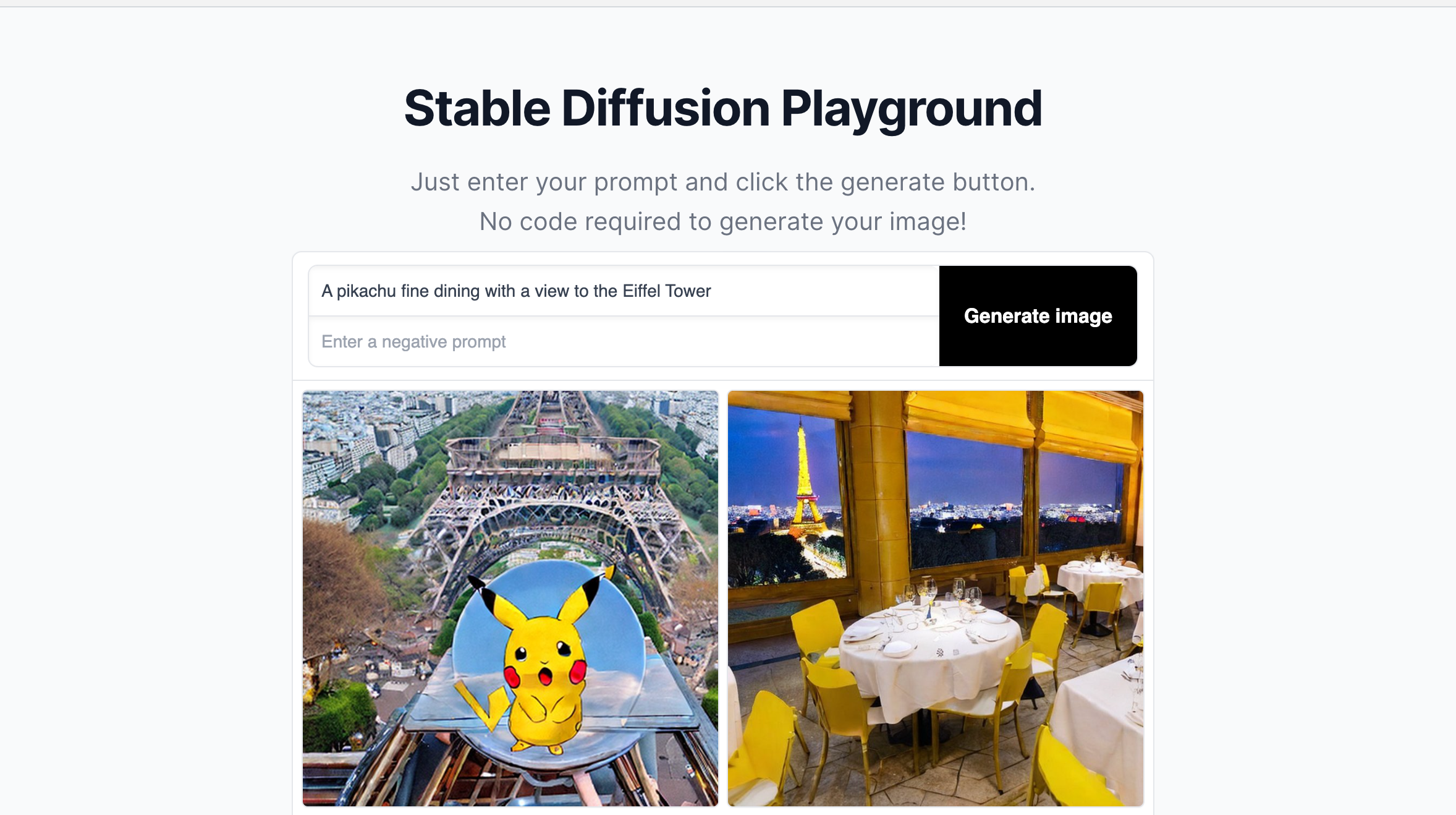

It’s easy to get started with using Stable Diffusion. They offer a “playground” on their website where you can try out some different prompts. I tried one of their sample prompts, “A Pikachu fine dining with a view to the Eiffel Tower.” My results were…strange. It could benefit from some user testing.

As with any AI, though, it’s important to fine-tune your prompts to get it to generate what you want. To get better at writing good prompts, you might want to look into prompt engineering courses.

Stable Diffusion claims that they don’t collect or use any personal information, nor do they store your text or images.

Unlike the other two AI art generator machine learning models mentioned, Stable Diffusion places no limitations on what you enter. It’s also completely free to use. So once the tool became open source, it didn’t take long for people to start using it to create AI generated porn. One of these AI porn generators played on the name Stable Diffusion and called itself Unstable Diffusion.

The popular app, Lensa AI, is built using Stable Diffusion’s model.

If you’re looking for an AI art generator with no restrictions, Stable Diffusion might be your best bet.

Advocates of AI Generated Art

As one user says: “I love it so much because I love art so much. And you literally gave me a way to be an artist. Where I did not have that before…”

Many people have an artistic vision. Something that they’d like to create. However, they don’t have the artistic skills to make that vision come to life and create art.

A power user of both the Stable Diffusion and Midjourney models loves comic books and graphic novels, and dreamed of one day making his own. Before AI generated art, doing it on his own would have taken years. Now with these tools, it might be possible for him to create realistic images sooner.

Art with AI enables that for those people.

Controversies around AI art generation

While AI art generators are extremely exciting to many, others have deep concerns about them. Many concerns involve where the data is coming from. Other concerns involve whether these tools will replace artists.

Data privacy violations

Many questions have come up around where the developers of these AI art generator tools find the images used to train their models. There have been instances of direct data privacy violations where private medical imagery has been used in these training datasets.

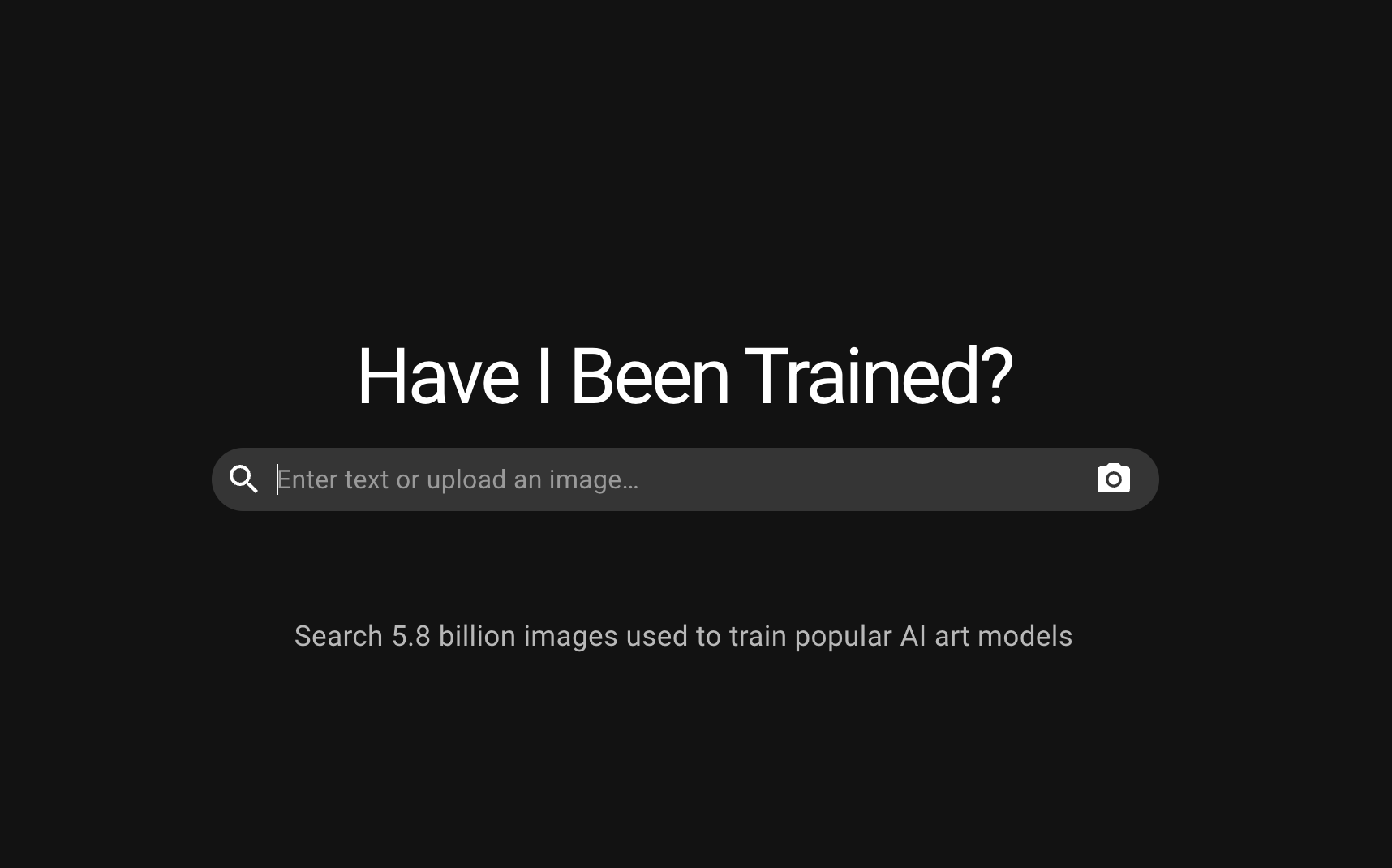

A tool called Have I Been Trained, which we’ll talk a bit more about later on, allows people to search and find out if their artwork or likeness has been included in the data used to train the AI image generator tools.

Lapine, a California-based artist, uploaded a recent photo of herself using the tool’s upload an image feature. She was shocked to discover a set of medical photos of her face, which she’d only authorized for private use by her doctor. It turns out that her doctor had passed away, and somehow these medical images left his practice’s custody.

As the artist says, “I signed a consent form for my doctor – not for a dataset.”

🚩My face is in the #LAION dataset. In 2013 a doctor photographed my face as part of clinical documentation. He died in 2018 and somehow that image ended up somewhere online and then ended up in the dataset- the image that I signed a consent form for my doctor- not for a dataset. pic.twitter.com/TrvjdZtyjD

— Lapine (@LapineDeLaTerre) September 16, 2022

Of course, a big question is how did these images end up online anyway? Can we fault the developer for not looking at every single image pulled into the dataset? Many would say that the people creating these tools have an obligation to take responsibility.

Copyright violations

Beyond the issue of data privacy comes the issue of copyright and perceived ownership of the work.

Much of the art generated by these AI art generator tools falls under the 3D art style because a lot of the data used to train them comes from classical art. When an artist has long since passed away, their work is generally considered to be in the public domain.

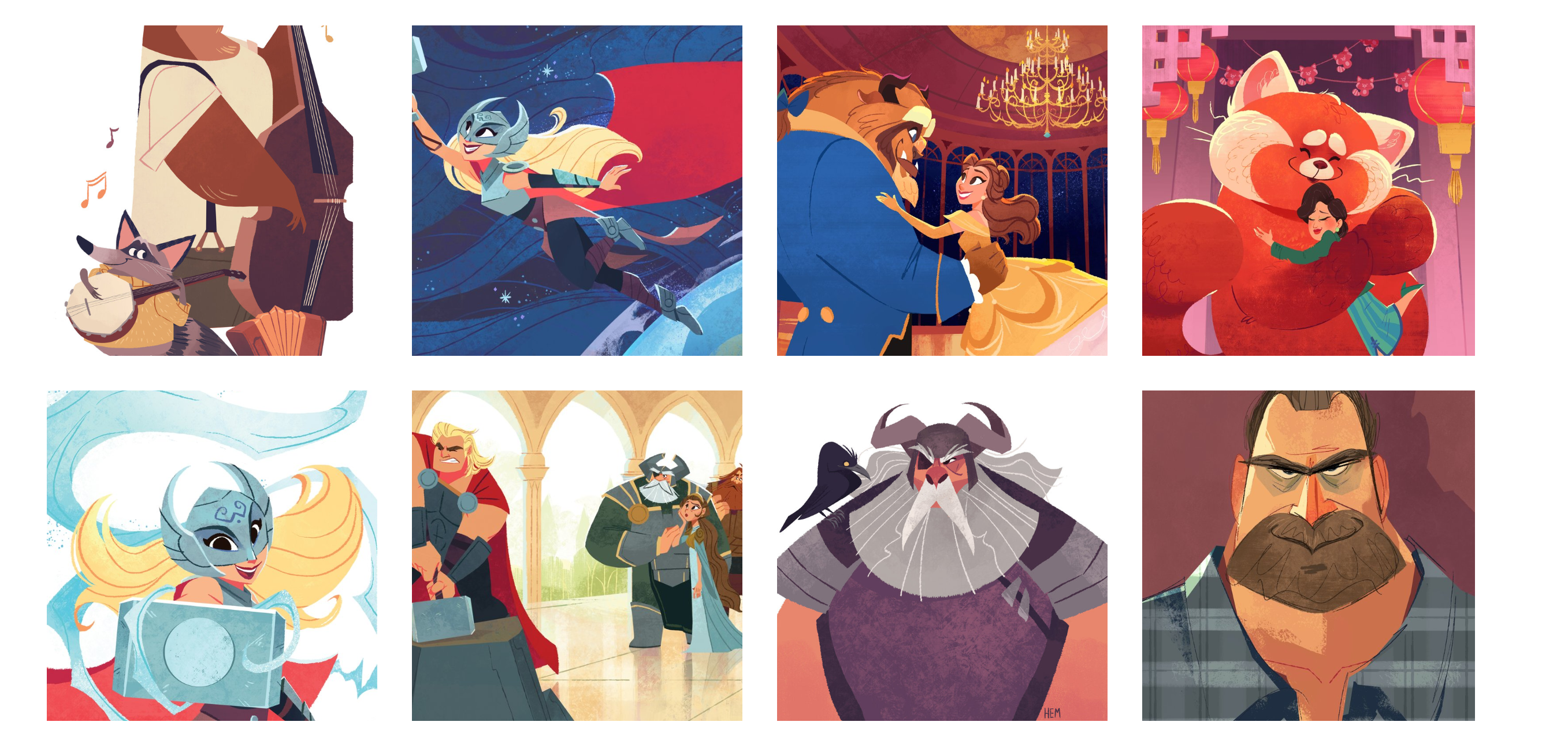

A Redditor pointed out that “2D illustration styles are scarce,” which prompted them to create a model inspired by Hollie Mengert, an artist with a very expressive 2D art style.

The following is a picture of the generated images created by the AI image generator.

The following is a screenshot of portfolio pieces on Hollie Mengert’s website.

You can see the similarities between the two sets of images, but there are also distinct differences.

Hollie Mengert expressed concerns in an interview with Waxy. Namely, she noted that many images given to the AI had been paid projects she’d done for clients like Disney and Penguin Random House. She pointed out that they’d paid for her to create those images and, therefore, they own those images. Mengert only posts those images publicly when they have given her permission, so she asserted that no one else should be able to use these images without the permission of those companies.

However, a counter-argument that has been made numerous times, including on the original Reddit thread, is that you can’t copyright a style. That has been evident across the ages with art. Artistic imitations of art styles constantly happen, with or without the artist’s permission.

It’s a very complicated issue. If you want to learn more, The Verge released a very detailed article about AI copyright and how no one knows what will happen next.

Replacing artists

While AI writing tools can’t quite replace humans, artists around the world claim that AI art generators have already been replacing them.

As one of many examples, artist Karla Ortiz pointed out on Twitter that the San Francisco Ballet used an AI art generator to generate an illustration for a poster.

A few enthusiastic but misinformed AI tech writers: Ai MeDiA WoN’t TaKe ArTisT’s JoB

Meanwhile the @sfballet to potentially use Midjourney to generate an illustration for a poster. Folks, that WAS someone’s job!! https://t.co/JvUQ2DJMjX pic.twitter.com/GSdb68wfgt

— Karla Ortiz 🐀 (@kortizart) December 2, 2022

As she points out, that used to be someone’s job.

Correction the image is 100% AI. 😫 pic.twitter.com/6DflPqLCFG

— Karla Ortiz 🐀 (@kortizart) December 2, 2022

Strategies to mitigate unethical data usage

Empowering the Artists

Not all artists are against AI art generation. However, many recognize and understand the concerns about using private medical imagery or images without proper consent or credit. A couple based in Berlin has created Spawning, a company building tools for artists to take ownership of their data. It will allow them to opt in to or out of their work being used in training large AI models.

Artists could even set permissions on how their style and likeness are used. If an artist so chooses, they could offer their own art generator models to the public. An artist may want to take the “permissive IP” approach, willingly allowing their art to be used to create AI generated art in exchange for a share of the profits.

The primary purpose of Spawning, however, is to establish a standard of consent that the groups developing AI art generators adhere to.

If artists would like to find out if their art has been used, they can use Have I Been Trained, the tool created by Spawning. Similarly, anyone can use the Have I Been Trained tool to find out if their picture or likeness is currently in these popular AI art training datasets.

Many opponents of AI art generation argue that using an artist’s art without their permission is a violation of their copyright. Spawning believes that “copyright is an outdated system that is a bad fit for the AI era.” Instead, they claim that the best way is to allow individuals to manage their preferences as far as style, likeness, and use of their art. The future, they believe, is allowing individual artists to determine their own comfort level with this changing technological landscape.

Therefore, if you’re an artist and you choose to participate in willingly allowing your art to be part of the datasets training an AI art generator, you should be able to be paid for your contributions. If you’re interested, definitely check Spawning out!

Possible Legal Actions

The Federal Trade Commission has begun demanding “algorithmic destruction” from companies that have built their machine learning models using personal information collected without proper consent. For example, WW International (formerly known as Weight Watchers) built a healthy eating app for kids called Kurbo using data from children as young as 8 without parental permission. The FTC fined WW International $1.5 million and ordered them to delete the illegally collected data, along with any models or algorithms built from it.

Given that these art generator machine learning models have been developed using sensitive data and copyrighted works, it’s possible that the FTC might fine some of these companies that use unethical training data.

Final thoughts

AI image generation is already here, and it’s here to stay. For many, this is a complex issue that raises many concerns. For others, it’s a new empowerment tool that enables a new form of artistic creation. The applications are endless, from novel cover creation to flyers to posters. We also have a list of the best AI video generators.